3. Orchestrating 4C Simulations

Until now, all the simulation models we have used in QUEENS were Python-based. In practice, however, many of us work with other solvers: from FEniCS to Abaqus, we all have our own preferred tools. One main advantage of QUEENS is that it is solver-independent. To use QUEENS with your favorite solver, the only thing you have to do is write an interface.

Since QUEENS and the multiphysics solver 4C share some developers, the QUEENS community already provides a ready-to-go interface. So let’s start there.

The 4C model

In this example, we’ll have a look at a nonlinear solid mechanics problem. The momentum equation is given by

\[ \rho \ddot{\boldsymbol{u}} = \nabla \cdot \boldsymbol{\sigma} + \boldsymbol{b} \quad \text{in } \Omega, \]

where \(\rho\) is the mass density, \(\boldsymbol{u}\) are the displacements, \(\boldsymbol{\sigma}\) the Cauchy stresses, \(\boldsymbol{b}\) the volumetric body forces, and \(\Omega\) the domain of the solid. For the following examples, \(\boldsymbol{b}=\boldsymbol{0}\), and we prescribe Dirichlet boundary conditions

and a Neumann boundary condition:

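We assume a static problem, so \(\ddot{\boldsymbol{u}} = \boldsymbol{0}\), and use a Saint Venant–Kirchhoff material model

\[ \boldsymbol{S} = \lambda \operatorname{tr}(\boldsymbol{E})\,\boldsymbol{I} + 2\mu\,\boldsymbol{E}, \]

where \(\boldsymbol{S}\) is the second Piola–Kirchhoff stress tensor, \(\boldsymbol{E}\) the Green–Lagrange strain tensor, and \(\boldsymbol{I}\) the second-order identity tensor, with the Lamé parameters

\[ \lambda = \frac{E\nu}{(1+\nu)(1-2\nu)}, \qquad \mu = \frac{E}{2(1+\nu)}, \]

where \(E\) is the Young’s modulus and \(\nu\) the Poisson’s ratio.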
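As a concrete illustration of the material model, here is a minimal NumPy sketch of the standard Saint Venant–Kirchhoff law \(\boldsymbol{S} = \lambda \operatorname{tr}(\boldsymbol{E})\boldsymbol{I} + 2\mu\boldsymbol{E}\) with \(\boldsymbol{E} = \tfrac{1}{2}(\boldsymbol{F}^T\boldsymbol{F} - \boldsymbol{I})\). It assumes a given deformation gradient \(\boldsymbol{F}\) and pushes \(\boldsymbol{S}\) forward to the Cauchy stress \(\boldsymbol{\sigma} = J^{-1}\boldsymbol{F}\boldsymbol{S}\boldsymbol{F}^T\) with \(J = \det\boldsymbol{F}\). The function names are our own, and this is not how 4C implements the model.

```python
import numpy as np


def lame_parameters(young, poisson_ratio):
    """Convert Young's modulus and Poisson's ratio into the Lamé parameters (lambda, mu)."""
    lam = young * poisson_ratio / ((1.0 + poisson_ratio) * (1.0 - 2.0 * poisson_ratio))
    mu = young / (2.0 * (1.0 + poisson_ratio))
    return lam, mu


def st_venant_kirchhoff_cauchy_stress(deformation_gradient, young, poisson_ratio):
    """Cauchy stress of a Saint Venant-Kirchhoff material for a deformation gradient F."""
    F = np.asarray(deformation_gradient, dtype=float)
    identity = np.eye(3)
    lam, mu = lame_parameters(young, poisson_ratio)

    # Green-Lagrange strain E = 1/2 (F^T F - I)
    green_lagrange = 0.5 * (F.T @ F - identity)

    # Second Piola-Kirchhoff stress S = lambda tr(E) I + 2 mu E
    second_pk = lam * np.trace(green_lagrange) * identity + 2.0 * mu * green_lagrange

    # Push forward to the Cauchy stress sigma = J^-1 F S F^T with J = det(F)
    jacobian = np.linalg.det(F)
    return F @ second_pk @ F.T / jacobian


lam, mu = lame_parameters(1000.0, 0.3)
print(f"lambda = {lam:.3f}, mu = {mu:.3f}")  # lambda = 576.923, mu = 384.615
```

For \(\nu \to 0.5\), the factor \(1 - 2\nu\) vanishes and \(\lambda\) grows without bound (the incompressible limit); in this sketch, `lame_parameters(1000.0, 0.5)` raises a `ZeroDivisionError`.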

The problem is discretized in space using 40 linear finite elements.

Note: This tutorial is based on the 4C tutorial "Preconditioners for Iterative Solvers".

Note: In the following, we use PyVista for plotting; however, this might not work for some setups. In that case, set pyvista_available to False in the next cell, and please refer to the online tutorial in the documentation for the figures.

[1]:

# Set this to False if pyvista is causing the kernel to crash
pyvista_available = True

[2]:

# Define some paths
from pathlib import Path

from tutorials.utils import find_repo_root

home = Path.home()
NOTEBOOK_DIR = Path.cwd()
QUEENS_BASE_DIR = find_repo_root(NOTEBOOK_DIR)
QUEENS_EXPERIMENTS_DIR = home / "queens-experiments"
TUTORIAL_DIR = QUEENS_BASE_DIR / "tutorials" / "3_orchestrating_4c_simulations"

[3]:

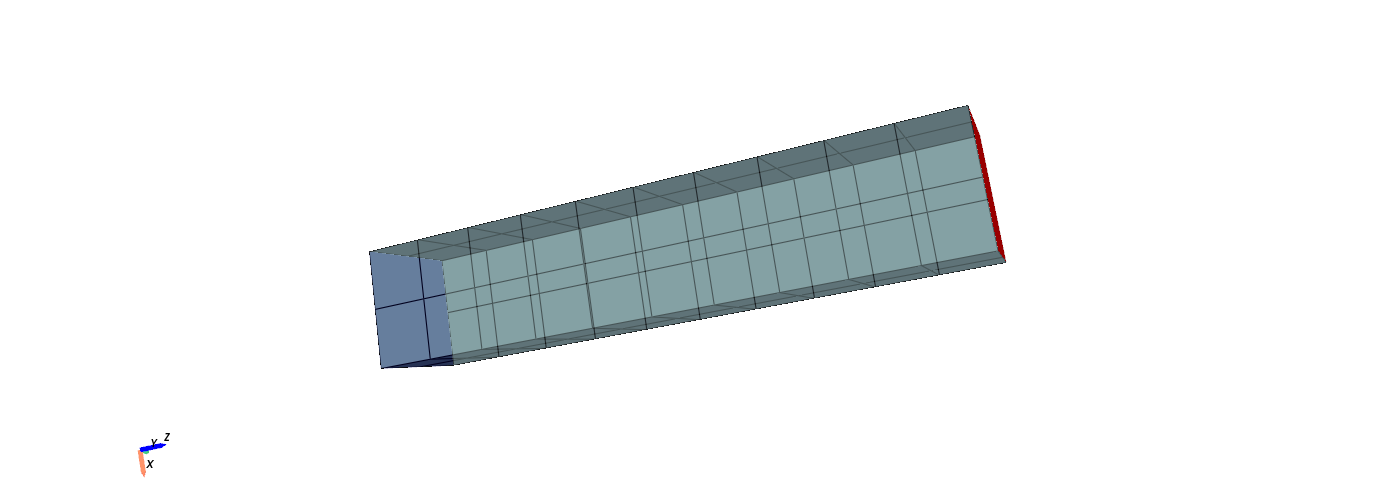

# Let's look at the mesh!

import os

if pyvista_available:

os.environ["PYVISTA_OFF_SCREEN"] = "true"

os.environ["VTK_DEFAULT_RENDER_WINDOW_OFFSCREEN"] = "1"

os.environ["PYVISTA_JUPYTER_BACKEND"] = "static"

import pyvista as pv

fe_mesh = pv.read(TUTORIAL_DIR / "beam_coarse.exo")

pl = pv.Plotter(off_screen=True, title="Finite Element mesh", window_size=(1400, 500))

pl.add_mesh(fe_mesh, show_edges=True, opacity=0.8)

pl.add_mesh(pv.Plane(center=[0, 0, -2.50001], direction=[0, 0, 1]), color="blue") # Gamma_w

pl.add_mesh(pv.Plane(center=[0, 0, 2.50001], direction=[0, 0, 1]), color="red") # Gamma_e

pl.add_axes(line_width=5)

pl.camera_position = [

(1.1321899035097993, -6.851600196807601, 2.7649096132703574),

(0.0, 0.0, 0.275),

(-0.97637930372977, -0.08995062285804697, 0.19644933366041056),

]

pl.show()

The red plane is \(\Gamma_e\), the blue one is \(\Gamma_w\).

Simulation Analytics

Now, we want to have a look at the Cauchy stresses \(\boldsymbol{\sigma}\) for various Young’s moduli \(E\) and Poisson’s ratios \(\nu\). For the purpose of demonstration, let’s assume a uniform distribution for each material parameter.

Of course, we use QUEENS for this, so let’s get started!

[4]:

from queens.distributions import Uniform
from queens.parameters import Parameters

# Let's define the distributions
young = Uniform(10, 10000)
poisson_ratio = Uniform(0, 0.5)

# Let's define the parameters
parameters = Parameters(young=young, poisson_ratio=poisson_ratio)
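Reading Uniform(10, 10000) and Uniform(0, 0.5) as continuous uniform distributions given by a lower and an upper bound, the following plain NumPy sketch shows what drawing samples from them amounts to. It uses numpy.random.Generator.uniform for illustration only and is not QUEENS' own sampling code.

```python
import numpy as np

# Fixed seed so that the draws are reproducible
rng = np.random.default_rng(seed=42)

# Draw 5 values per material parameter from U(lower, upper)
young_samples = rng.uniform(low=10.0, high=10000.0, size=5)
poisson_samples = rng.uniform(low=0.0, high=0.5, size=5)

# One row per sample; the columns follow the parameter order (young, poisson_ratio)
samples = np.column_stack([young_samples, poisson_samples])
print(samples)
```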

Now that we have the parameters ready, we need a driver. In QUEENS terms, drivers call the underlying simulation models, provide the simulation input, and handle logging and error management. They are the interfaces to arbitrary simulation software and essentially do everything that is needed to start a single simulation.

As mentioned before, a driver for 4C is already provided by the QUEENS community.
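To see what a driver does under the hood, here is a toy sketch using only the Python standard library. It is not the QUEENS Fourc driver: the function run_single_simulation, its arguments, and the use of str.format instead of Jinja2 are assumptions made for illustration. It maps a sample to parameter names, writes an input file, starts an executable, redirects its log, and reports failures instead of raising. The real driver does more, for example copying additional files (files_to_copy) and writing a job script and metadata.

```python
import subprocess
import sys
import tempfile
from pathlib import Path


def run_single_simulation(template, sample, parameter_names, command, job_dir):
    """Toy driver (hypothetical): fill an input template, run a solver, collect its log."""
    job_dir = Path(job_dir)
    job_dir.mkdir(parents=True, exist_ok=True)

    # Provide the simulation input: the sample order follows the parameter order
    values = dict(zip(parameter_names, sample))
    input_file = job_dir / "input_file.yaml"
    input_file.write_text(template.format(**values))

    # Start the simulation and redirect everything it prints to a log file
    log_file = job_dir / "output.log"
    with log_file.open("w") as log:
        completed = subprocess.run(
            [*command, str(input_file)], stdout=log, stderr=subprocess.STDOUT, check=False
        )

    # Minimal error management: report the return code instead of raising
    return {"success": completed.returncode == 0, "log": log_file.read_text()}


# Usage with a stand-in "solver" that simply echoes its input file
fake_solver = [sys.executable, "-c", "import sys; print(open(sys.argv[1]).read())"]
with tempfile.TemporaryDirectory() as tmp:
    output = run_single_simulation(
        "YOUNG: {young}\nNUE: {poisson_ratio}\n",
        [1000, 0.3],
        ["young", "poisson_ratio"],
        fake_solver,
        Path(tmp) / "job_0",
    )
print(output["success"], output["log"])
```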

Note: This tutorial assumes a working local 4C installation. Please create a symbolic link to your 4C build directory and store it under <queens-base-dir>/config/4C_build via this command:

ln -s <path-to-4C-build-directory> <queens-base-dir>/config/4C_build

[5]:

from queens_interfaces.fourc.driver import Fourc

fourc_executable = QUEENS_BASE_DIR / "config" / "4C_build" / "4C"
input_template = TUTORIAL_DIR / "input_template.4C.yaml"
input_mesh = TUTORIAL_DIR / "beam_coarse.exo"

fourc_driver = Fourc(
    parameters=parameters,  # parameters to run
    input_templates=input_template,  # input template
    executable=fourc_executable,  # 4C executable
    files_to_copy=[input_mesh],  # copy mesh file to experiment directory
)

4C simulations are controlled via YAML input files, and a new input file has to be created for every simulation. Therefore, we provide a Jinja2 input template to the driver. Jinja2 is a fast, expressive, and extensible templating engine. It starts from a template, for example

MATERIALS:
  - MAT: 1
    MAT_Struct_StVenantKirchhoff:
      YOUNG: {{ young }}
      NUE: {{ poisson_ratio }}
      DENS: 1

and inserts the desired values (here: young = 1000 and poisson_ratio = 0.3) into the respective placeholders (here: {{ young }} and {{ poisson_ratio }}). This results in:

MATERIALS:
  - MAT: 1
    MAT_Struct_StVenantKirchhoff:
      YOUNG: 1000
      NUE: 0.3
      DENS: 1
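The substitution step described above can be reproduced with Jinja2 directly. A minimal sketch, assuming the jinja2 package is installed; the template string is the material section from above, and how the Fourc driver renders its templates internally may differ.

```python
from jinja2 import StrictUndefined, Template

MATERIAL_TEMPLATE = """\
MATERIALS:
  - MAT: 1
    MAT_Struct_StVenantKirchhoff:
      YOUNG: {{ young }}
      NUE: {{ poisson_ratio }}
      DENS: 1
"""

# StrictUndefined makes rendering fail if a placeholder has no value
template = Template(MATERIAL_TEMPLATE, undefined=StrictUndefined)
rendered = template.render(young=1000, poisson_ratio=0.3)
print(rendered)
```

Using StrictUndefined is an optional choice: with Jinja2's default Undefined, a misspelled placeholder would silently render as an empty string and produce an invalid input file.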

For 4C, this allows us to create inputs for simulation runs without the need for a 4C API! Let’s run this example!

[6]:

import numpy as np

driver_output = fourc_driver.run(
    sample=np.array([1000, 0.3]),
    job_id="fourc_young_1000_poisson_ratio_03",
    num_procs=1,
    experiment_dir=Path.cwd(),
    experiment_name="fourc_run",
)

Some information:

sample: the input values at which we want to evaluate the model. The order of the entries is given by the order in which we defined the parameters.

job_id: the identifier of the 4C run. A folder with the same name is created, in which the data is stored.

num_procs: 4C is called using MPI; this is the number of processes 4C is started with.

experiment_dir: the directory where we find all necessary data for 4C (input template or any other required files).

experiment_name: the identifier of a QUEENS run. This will come in handy when we evaluate 4C multiple times.

Let’s look at the output folder structure of the QUEENS run:

fourc_young_1000_poisson_ratio_03/
├── input_file.yaml
├── jobscript.sh
├── metadata.yaml
└── output
    ├── output.control
    ├── output.log
    ├── output.mesh.structure.s0
    ├── output-structure.pvd
    └── output-vtk-files
        ├── structure-00000-0.vtu
        ├── structure-00000.pvtu
        ├── structure-00001-0.vtu
        └── structure-00001.pvtu

What are those files?

input_file.yaml: the 4C input file with \(E=1000\) and \(\nu=0.3\)

jobscript.sh: contains the command with which 4C was called

metadata.yaml: contains metadata of the 4C run (timings, inputs, outputs, …)

output/: all the files in here are output files of 4C! The sole exception is output.log, to which QUEENS redirected the logging data.

Go have a look at the yaml, sh and log files.
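If you prefer inspecting the job folder from Python, a small standard-library helper can print a listing similar to the one above (entries are sorted by name, so the order may differ slightly from the tree command). The function print_tree is our own, hypothetical helper; the commented usage assumes the job folder of this example exists in the current working directory.

```python
from pathlib import Path


def print_tree(directory, prefix=""):
    """Recursively print the contents of `directory` in the style of the `tree` command."""
    entries = sorted(Path(directory).iterdir(), key=lambda path: path.name)
    for index, entry in enumerate(entries):
        is_last = index == len(entries) - 1
        print(f"{prefix}{'└── ' if is_last else '├── '}{entry.name}")
        if entry.is_dir():
            # Keep drawing the vertical guide only if more siblings follow
            print_tree(entry, prefix + ("    " if is_last else "│   "))


# Usage (hypothetical, after the run above):
# job_dir = Path("fourc_young_1000_poisson_ratio_03")
# print(f"{job_dir.name}/")
# print_tree(job_dir)
# print((job_dir / "jobscript.sh").read_text())
```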

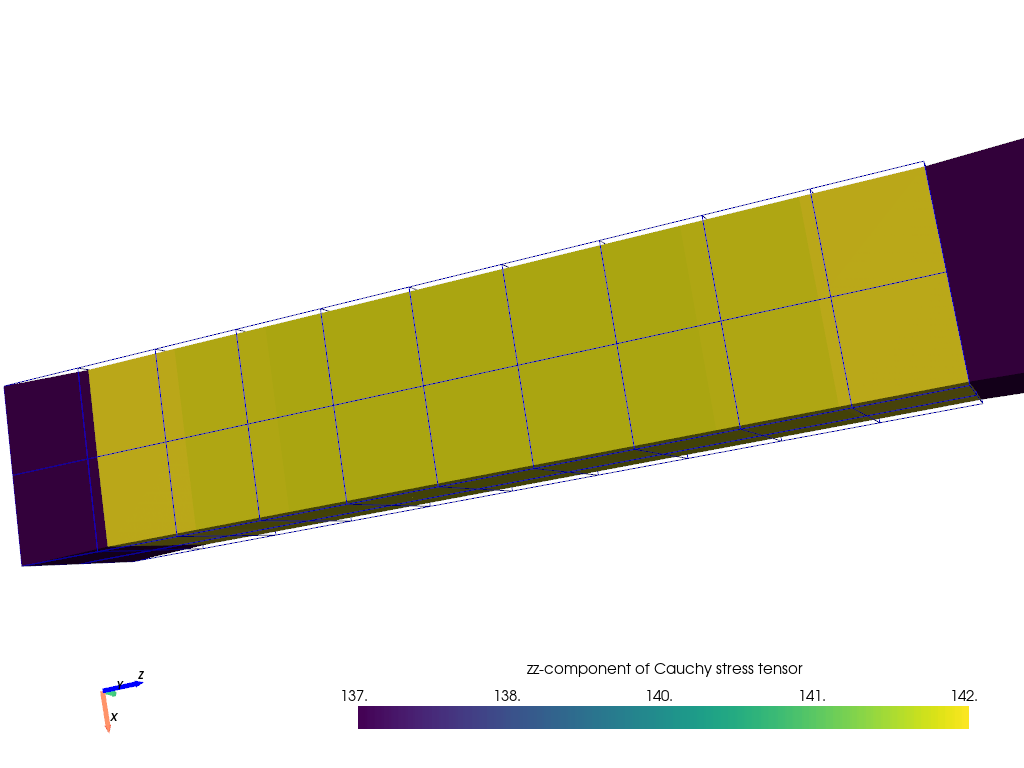

Now, let us look at the Cauchy stresses \(\boldsymbol{\sigma}\).

[7]:

# This is just needed for some nice plots
from plot_results import plot_results

if pyvista_available:
    plotter = pv.Plotter()
    plot_results(
        "fourc_young_1000_poisson_ratio_03/output/output-vtk-files/structure-00001.pvtu",
        plotter,
    )
    plotter.show()

We plotted the deformed mesh. The original mesh is displayed as the blue ‘wireframe’. Let us look into the driver output in Python.

[8]:

print("Driver output:", driver_output)

Driver output: {}

As we can see, it’s {}. But why is it {}?

Well, we told the driver how to run a 4C simulation, but never told it which information we want to extract. For this we need a data processor, i.e., an object that is able to extract data from the simulation results.
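Here, the simulation results are referenced through a .pvd file, a small ParaView XML collection that lists one VTK file per time step. As a rough sketch of the first thing a PVD-based data processor has to do, the snippet parses such a collection with the standard library and returns the file of the last time step. That the QUEENS PvdFile reader works exactly like this is an assumption, and the example XML only mirrors the file names from the output folder; it is not copied from a real 4C run.

```python
import xml.etree.ElementTree as ET

# Illustrative PVD collection (not copied from a real 4C run)
EXAMPLE_PVD = """<?xml version="1.0"?>
<VTKFile type="Collection" version="0.1">
  <Collection>
    <DataSet timestep="0" part="0" file="output-vtk-files/structure-00000.pvtu"/>
    <DataSet timestep="1" part="0" file="output-vtk-files/structure-00001.pvtu"/>
  </Collection>
</VTKFile>
"""


def last_time_step_file(pvd_text):
    """Return (time, file) of the latest DataSet in a PVD collection, or None if it is empty."""
    root = ET.fromstring(pvd_text)
    datasets = root.findall("./Collection/DataSet")
    if not datasets:
        # Nothing was written, e.g. the simulation could not even start
        return None
    latest = max(datasets, key=lambda dataset: float(dataset.get("timestep")))
    return float(latest.get("timestep")), latest.get("file")


print(last_time_step_file(EXAMPLE_PVD))  # (1.0, 'output-vtk-files/structure-00001.pvtu')
```

If a collection only contains the entry for time step 0, the latest file presumably holds the undeformed initial state with zero stresses, which is the situation the custom data processor maps to its failure value.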

[9]:

from queens.data_processors import PvdFile


# Create custom data processor from the available PVD file reader
class CustomDataProcessor(PvdFile):
    def __init__(
        self,
        field_name,
        failure_value,
        file_name_identifier=None,
        files_to_be_deleted_regex_lst=None,
    ):
        super().__init__(
            field_name,
            file_name_identifier,
            file_options_dict={},
            files_to_be_deleted_regex_lst=files_to_be_deleted_regex_lst,
            point_data=False,  # We use cell data
        )
        self.failure_value = failure_value

    def get_data_from_file(self, base_dir_file):
        # Get the data
        data = super().get_data_from_file(base_dir_file)

        # Got some data
        if data is not None:
            data = data.flatten()

            # Simulation started but only the first step was exported
            if np.abs(data).sum() < 1e-8:
                return self.failure_value

            # Return result
            return data

        else:
            # Simulation could not even start
            return self.failure_value


custom_data_processor = CustomDataProcessor(
    field_name="element_cauchy_stresses_xyz",  # Name of the field in the pvtu file
    file_name_identifier="output-structure.pvd",  # Name of the file in the output folder
    failure_value=np.nan * np.ones(40 * 6),  # NaN for all elements
)

We have defined our data processor, so let’s continue.

[10]:

# Create a new driver
fourc_driver = Fourc(
    parameters=parameters,
    input_templates=input_template,
    executable=fourc_executable,
    data_processor=custom_data_processor,  # tell the driver to extract the data
    files_to_copy=[input_mesh],  # copy mesh file to experiment directory
)

# Run the driver again
driver_output = fourc_driver.run(
    sample=np.array([1000, 0.3]),
    job_id="fourc_young_1000_poisson_ratio_03",
    num_procs=1,
    experiment_dir=Path.cwd(),
    experiment_name="fourc_run",
)

print("Driver output", driver_output)
print("Key(s) in driver output:", list(driver_output.keys()))
print(
    "Number of entries in the 'result' key:",
    len(driver_output["result"]),
    "i.e., 40 elements x 6 Cauchy stress values",
)

Driver output {'result': [23.310575607899786, 23.310575607899786, 136.87158285413878, 0.17608189822290854, -8.120652508316304, -8.120652508316343, -0.8703804307951432, -0.8703804307951432, 142.47376188923698, 0.07094729780686747, 1.6044465736013382, 1.604446573601267, -0.3194736081806936, -0.31947360818066484, 142.3310284901062, 0.11705822369646385, 0.22541712867759353, 0.22541712867755204, -0.018423899258083687, -0.01842389925808369, 142.26054986020281, -0.010608294726153116, -0.0031084614595640967, -0.003108461459531618, 0.0037657484222159503, 0.0037657484222063694, 142.2553738655139, -0.00028080448802219736, -0.0028920680026516283, -0.002892068002633175, 0.0037657484222279264, 0.003765748422247088, 142.25537386551392, -0.0002808044880189109, 0.002892068002683122, 0.002892068002706107, -0.018423899258083694, -0.01842389925808369, 142.2605498602028, -0.010608294726147499, 0.003108461459558988, 0.00310846145957219, -0.31947360818075865, -0.31947360818075865, 142.33102849010604, 0.11705822369645978, -0.22541712867758687, -0.2254171286775743, -0.8703804307952198, -0.8703804307952198, 142.47376188923687, 0.07094729780686236, -1.604446573601338, -1.6044465736013127, 23.310575607899757, 23.310575607899757, 136.8715828541387, 0.17608189822290635, 8.1206525083163, 8.120652508316295, 23.310575607899825, 23.310575607899807, 136.87158285413886, -0.17608189822290835, 8.120652508316285, -8.120652508316363, -0.8703804307951646, -0.8703804307951357, 142.47376188923684, -0.0709472978068689, -1.6044465736013551, 1.6044465736012816, -0.3194736081806864, -0.31947360818064807, 142.33102849010623, -0.11705822369646311, -0.2254171286775578, 0.2254171286776073, -0.018423899258098064, -0.018423899258117223, 142.2605498602028, 0.010608294726157085, 0.003108461459566587, -0.003108461459566994, 0.0037657484222135555, 0.0037657484222135542, 142.25537386551392, 0.0002808044880301801, 0.0028920680026720408, -0.0028920680026568646, 0.0037657484222470877, 0.003765748422227926, 
142.25537386551395, 0.0002808044880186256, -0.0028920680026543397, 0.0028920680026739065, -0.01842389925804058, -0.018423899258040576, 142.26054986020296, 0.010608294726143346, -0.003108461459555874, 0.0031084614595820086, -0.3194736081807155, -0.3194736081807156, 142.33102849010618, -0.1170582236964608, 0.22541712867758987, -0.22541712867757965, -0.8703804307951646, -0.8703804307951549, 142.47376188923687, -0.07094729780685607, 1.6044465736013354, -1.6044465736013263, 23.310575607899736, 23.31057560789972, 136.87158285413858, -0.17608189822289652, -8.12065250831629, 8.120652508316313, 23.310575607899818, 23.310575607899818, 136.87158285413886, -0.1760818982229085, -8.120652508316327, 8.120652508316319, -0.8703804307952298, -0.870380430795249, 142.4737618892367, -0.0709472978068726, 1.6044465736013271, -1.6044465736013154, -0.3194736081806652, -0.31947360818069404, 142.33102849010623, -0.11705822369646521, 0.2254171286775956, -0.2254171286776278, -0.018423899258110044, -0.01842389925813878, 142.26054986020273, 0.01060829472615785, -0.0031084614595639827, 0.0031084614595864053, 0.0037657484222135542, 0.003765748422213555, 142.25537386551392, 0.0002808044880272362, -0.0028920680026481324, 0.002892068002681941, 0.003765748422213555, 0.003765748422213555, 142.25537386551392, 0.00028080448801934233, 0.002892068002672847, -0.0028920680026665708, -0.018423899258102852, -0.018423899258102856, 142.26054986020287, 0.010608294726144375, 0.00310846145955566, -0.0031084614595663293, -0.31947360818072995, -0.3194736081807491, 142.33102849010618, -0.11705822369645856, -0.22541712867758493, 0.2254171286775792, -0.8703804307952197, -0.8703804307952199, 142.47376188923684, -0.0709472978068567, -1.6044465736013385, 1.6044465736013147, 23.310575607899796, 23.31057560789977, 136.87158285413872, -0.17608189822290024, 8.120652508316285, -8.12065250831631, 23.310575607899803, 23.3105756078998, 136.87158285413884, 0.17608189822291131, 8.120652508316319, 8.12065250831634, 
-0.8703804307952997, -0.87038043079529, 142.47376188923656, 0.07094729780687398, -1.6044465736012996, -1.6044465736012947, -0.31947360818062925, -0.31947360818061016, 142.33102849010643, 0.11705822369646067, -0.22541712867756097, -0.22541712867757752, -0.01842389925805615, -0.018423899258099258, 142.26054986020281, -0.010608294726155558, 0.0031084614595746464, 0.0031084614595567666, 0.003765748422213555, 0.0037657484222135542, 142.25537386551392, -0.0002808044880198374, 0.0028920680026661943, 0.0028920680026751668, 0.003765748422213555, 0.0037657484222135542, 142.25537386551392, -0.00028080448801903366, -0.0028920680026630155, -0.0028920680026737473, -0.018423899258081296, -0.01842389925807172, 142.26054986020293, -0.010608294726147294, -0.0031084614595606108, -0.0031084614595669746, -0.319473608180682, -0.3194736081807012, 142.33102849010618, 0.11705822369646061, 0.2254171286775861, 0.22541712867757052, -0.8703804307952197, -0.8703804307952197, 142.47376188923684, 0.07094729780686043, 1.6044465736013338, 1.6044465736013271, 23.3105756078997, 23.310575607899683, 136.87158285413858, 0.17608189822290632, -8.120652508316299, -8.120652508316292]}

Key(s) in driver output: ['result']

Number of entries in the 'result' key: 240 i.e., 40 elements x 6 Cauchy stress values

The driver output can contain two keys: ‘result’ and ‘gradient’. The first one holds the model output, the second one the model gradient.

Note: The gradient value is only available for certain models; this particular 4C model does not provide it.
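The 240 entries of ‘result’ are stored element by element. A minimal sketch for working with them reshapes the flat list into one row per element and computes the von Mises equivalent stress. It assumes six components per element in the order xx, yy, zz, xy, yz, xz; this ordering is our assumption and should be checked against the 4C output before relying on it. Only the position of the three normal components matters for the von Mises value, since the shear components enter as a sum of squares. Failed runs, for which the data processor returns NaN, simply propagate as NaN.

```python
import numpy as np


def element_stresses(flat_result, n_elements=40, n_components=6):
    """Reshape the flat 'result' list into an (n_elements, n_components) array."""
    return np.asarray(flat_result, dtype=float).reshape(n_elements, n_components)


def von_mises(stresses):
    """Von Mises stress per row, assuming columns [xx, yy, zz, xy, yz, xz]."""
    s_xx, s_yy, s_zz = stresses[:, 0], stresses[:, 1], stresses[:, 2]
    shear_squared = np.sum(stresses[:, 3:6] ** 2, axis=1)
    normal_part = 0.5 * ((s_xx - s_yy) ** 2 + (s_yy - s_zz) ** 2 + (s_zz - s_xx) ** 2)
    return np.sqrt(normal_part + 3.0 * shear_squared)


# Usage with the output of the driver run above:
# stresses = element_stresses(driver_output["result"])
# print(von_mises(stresses).max())
```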

What we did:

We defined a driver using QUEENS to run a 4C simulation via a Python function call

We provided a custom way of extracting simulation results

Although we selected a single process, setting num_procs > 1 allows us to run a simulation in parallel without having to change anything in the interface!

The complexity of working with a sophisticated simulation code (without an API) is hidden from the user!

Instead, it is reduced to fourc_driver.run(<arguments>).

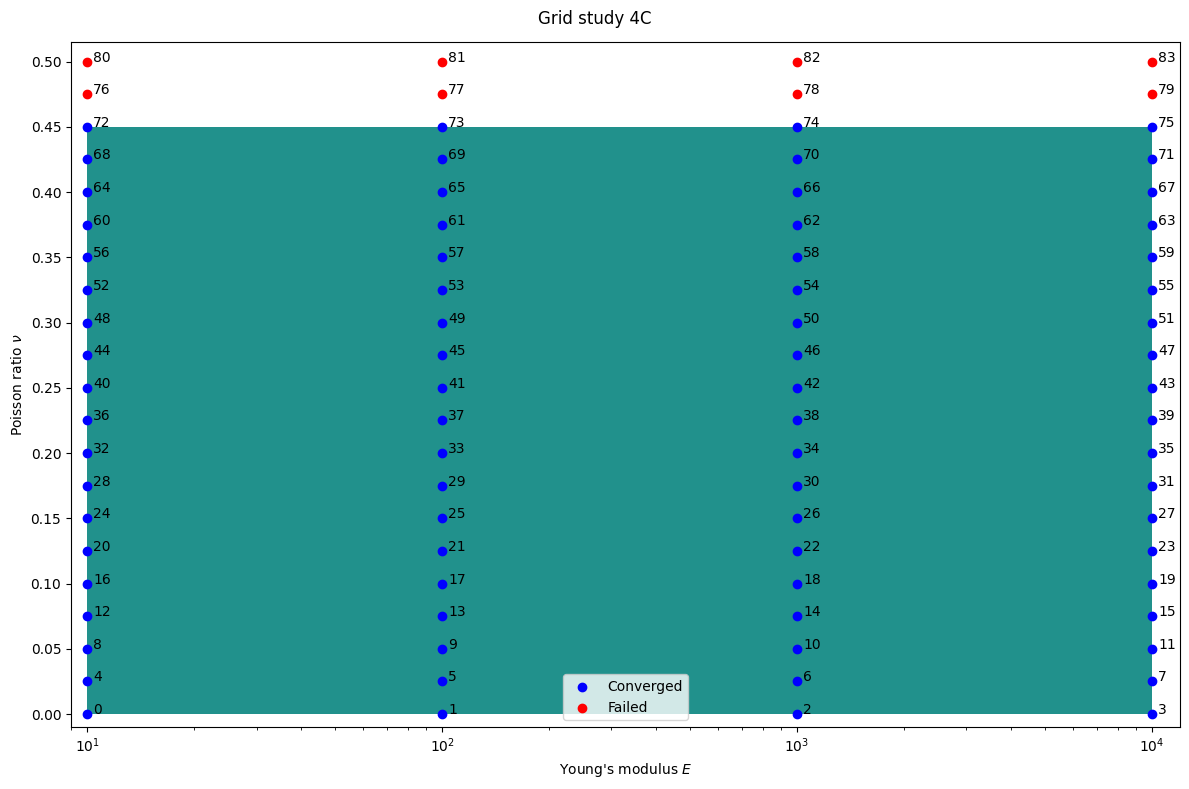

Now, in the day-to-day of computational mechanics research, we often want to study the convergence of a model depending on certain parameters. In this example, we’ll look for parameter combinations with \(E \in [10,10000]\) and \(\nu \in [0,0.5]\) that lead to a failing simulation. Let’s evaluate the 4C model on a grid of \(4 \times 21\) points, i.e., 4 values of \(E\) on a logarithmic axis and 21 values of \(\nu\) on a linear axis.
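To picture which parameter combinations are evaluated, the 84 grid points can be sketched with NumPy. This assumes that a "log10" axis spaces the points evenly in \(\log_{10} E\) between the bounds of the uniform distribution and that a "lin" axis spaces them evenly in \(\nu\); that is our reading of the grid_design options used in the next cell, not something taken from the QUEENS documentation, and the row order QUEENS uses may differ.

```python
import numpy as np

# Bounds of the uniform distributions defined earlier
young_bounds = (10.0, 10000.0)
poisson_bounds = (0.0, 0.5)

# 4 points evenly spaced in log10(E), 21 points evenly spaced in nu
young_values = np.logspace(np.log10(young_bounds[0]), np.log10(young_bounds[1]), 4)
poisson_values = np.linspace(poisson_bounds[0], poisson_bounds[1], 21)

# All combinations as an (84, 2) array of [young, poisson_ratio] rows
young_grid, poisson_grid = np.meshgrid(young_values, poisson_values, indexing="ij")
samples = np.column_stack([young_grid.ravel(), poisson_grid.ravel()])
```

Note that under this assumption the last value of \(\nu\) is exactly 0.5, the incompressible limit in which \(\lambda\) is not finite, which may be worth keeping in mind when interpreting failed runs.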

[11]:

# Make it less verbose
import logging

logging.getLogger("distributed").setLevel(logging.WARNING)

from queens.schedulers import Local
from queens.iterators import Grid
from queens.models import Simulation
from queens.global_settings import GlobalSettings
from queens.main import run_iterator
from queens.utils.io import load_result

n_grid_points_young = 4
n_grid_points_poisson_ratio = 21
grid_output = None

if __name__ == "__main__":
    Path("grid").mkdir(exist_ok=True)
    with GlobalSettings(
        experiment_name=f"grid_{n_grid_points_young}x{n_grid_points_poisson_ratio}",
        output_dir="grid",
    ) as gs:
        scheduler = Local(
            gs.experiment_name,
            num_jobs=4,  # 4 simulations in parallel
            num_procs=1,  # each simulation uses 1 process
        )
        model = Simulation(scheduler, fourc_driver)
        iterator = Grid(
            model=model,
            parameters=parameters,
            global_settings=gs,
            result_description={"write_results": True},
            grid_design={
                "young": {
                    "num_grid_points": n_grid_points_young,
                    "axis_type": "log10",
                    "data_type": "FLOAT",
                },
                "poisson_ratio": {
                    "num_grid_points": n_grid_points_poisson_ratio,
                    "axis_type": "lin",
                    "data_type": "FLOAT",
                },
            },
        )
        run_iterator(iterator, gs)
        grid_output = load_result(gs.result_file("pickle"))


QUEENS (Quantification of Uncertain Effects in ENgineering Systems):

a Python framework for solver-independent multi-query

analyses of large-scale computational models.

+---------------------------------------------------------------------------------------------------+

| Local |

|- - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - -|

| self : <queens.schedulers.local.Local object at 0x7f261a7b2190> |

| experiment_name : 'grid_4x21' |

| num_jobs : 4 |

| num_procs : 1 |

| restart_workers : False |

| verbose : True |

| experiment_base_dir : None |

| overwrite_existing_experiment : False |

+---------------------------------------------------------------------------------------------------+


+---------------------------------------------------------------------------------------------------+

| Simulation |

|- - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - -|

| self : <queens.models.simulation.Simulation object at 0x7f261ac983d0> |

| scheduler : <queens.schedulers.local.Local object at 0x7f261a7b2190> |

| driver : <queens_interfaces.fourc.driver.Fourc object at 0x7f264292d550> |

+---------------------------------------------------------------------------------------------------+

+----------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------+

| Grid |

|- - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - |

| self : <queens.iterators.grid.Grid object at 0x7f2619ebff90> |

| model : <queens.models.simulation.Simulation object at 0x7f261ac983d0> |

| parameters : <queens.parameters.parameters.Parameters object at 0x7f26f441e650> |

| global_settings : <queens.global_settings.GlobalSettings object at 0x7f2619ea7d10> |

| result_description : {'write_results': True} |

| grid_design : {'young': {'num_grid_points': 4, 'axis_type': 'log10', 'data_type': 'FLOAT'}, 'poisson_ratio': {'num_grid_points': 21, 'axis_type': 'lin', 'data_type': 'FLOAT'}} |

+----------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------+

+---------------------------------------------------------------------------------------------------+

| git information |

|- - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - -|

| commit hash : 694e30c5df1772a57d6a18f2e62c1605cbf007c8 |

| branch : main |

| clean working tree : False |

+---------------------------------------------------------------------------------------------------+

Grid for experiment: grid_4x21

Starting Analysis...

95%|█████████▌| 80/84 [00:22<00:01, 2.74it/s]

The file '/github/home/queens-experiments/grid_4x21/81/output/output-structure.pvd' does not exist!

96%|█████████▋| 81/84 [00:24<00:02, 1.44it/s]

The file '/github/home/queens-experiments/grid_4x21/80/output/output-structure.pvd' does not exist!

The file '/github/home/queens-experiments/grid_4x21/82/output/output-structure.pvd' does not exist!

99%|█████████▉| 83/84 [00:24<00:00, 2.21it/s]

The file '/github/home/queens-experiments/grid_4x21/83/output/output-structure.pvd' does not exist!

100%|██████████| 84/84 [00:24<00:00, 2.67it/s]

+---------------------------------------------------------------------------------------------------+

| Batch summary for jobs 0 - 83 |

|- - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - -|

| number of jobs : 84 |

| number of parallel jobs : 4 |

| number of procs : 1 |

| total elapsed time : 2.492e+01s |

| average time per parallel job : 1.187e+00s |

+---------------------------------------------------------------------------------------------------+

100%|██████████| 84/84 [00:24<00:00, 3.37it/s]

Time for CALCULATION: 24.946449756622314 s


[12]:

# Let's plot the data
import matplotlib.pyplot as plt

grid_points = grid_output["input_data"]
cauchy_stresses = grid_output["raw_output_data"]["result"]

# Sort the samples into converged and failed (NaN output) simulations
converged_samples = []
failed_samples = []
failed = []
for input_sample, cauchy_output in zip(grid_points, cauchy_stresses, strict=True):
    if np.isnan(cauchy_output).any():
        failed_samples.append(input_sample)
        failed.append(1)
    else:
        converged_samples.append(input_sample)
        failed.append(0)

converged_samples = np.array(converged_samples)
failed_samples = np.array(failed_samples)
failed = np.array(failed)

fig, ax = plt.subplots(figsize=(12, 8))
# Fill the region where failed == 0, i.e., the converged part of the parameter space
ax.contourf(
    grid_points[:, 0].reshape(21, 4),
    grid_points[:, 1].reshape(21, 4),
    failed.reshape(21, 4),
    levels=[0, 0.000001],
)
# Annotate each sample with its job id
for i, gp in enumerate(grid_points):
    ax.text(gp[0] * 1.04, gp[1], i)
ax.scatter(converged_samples[:, 0], converged_samples[:, 1], c="b", label="Converged")
ax.scatter(failed_samples[:, 0], failed_samples[:, 1], c="r", label="Failed")
ax.set_xlim(10 * 0.9, 10000 * 1.2)
ax.set_yticks(np.linspace(0, 0.5, 11))
ax.set_ylim(-0.01, 0.515)
ax.set_xlabel("Young's modulus $E$")
ax.set_xscale("log")
ax.set_ylabel("Poisson ratio $\\nu$")
fig.suptitle("Grid study 4C")
ax.legend()
fig.tight_layout()
plt.show()

Each point represents one simulation, i.e., one 4C run with a different parameter combination. The number next to it is the job ID, i.e., the unique identifier QUEENS assigns to this simulation. The colors of the points mean:

blue: The simulation ran through and returned a value.

red: The simulation failed and NaN was returned.

The colored background marks the region of the parameter space in which the simulations converged.
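To read the convergence boundary off the grid numerically instead of from the plot, you can group the samples by Young's modulus and take the largest converged Poisson's ratio for each group. The helper `max_converged_poisson_ratio` is a hypothetical sketch, assuming `grid_points` has shape `(n, 2)` with columns `[E, nu]` and `failed` is the 0/1 array built in the plotting cell; the toy grid in the usage lines is made up and is not the 4C result.

```python
import numpy as np


def max_converged_poisson_ratio(grid_points, failed):
    """Return {E: largest converged nu} for each Young's modulus on the grid.

    Hypothetical helper; assumes column 0 holds E and column 1 holds nu.
    """
    grid_points = np.asarray(grid_points, dtype=float)
    converged = np.asarray(failed) == 0
    boundary = {}
    for young in np.unique(grid_points[:, 0]):
        mask = (grid_points[:, 0] == young) & converged
        if mask.any():
            boundary[float(young)] = float(grid_points[mask, 1].max())
        else:
            boundary[float(young)] = float("nan")  # nothing converged for this E
    return boundary


# Toy 2 x 3 grid (made-up data): E varies fastest, nu in {0.0, 0.25, 0.5}
toy_points = np.array(
    [[10, 0.0], [100, 0.0], [10, 0.25], [100, 0.25], [10, 0.5], [100, 0.5]]
)
toy_failed = np.array([0, 0, 0, 0, 1, 1])
print(max_converged_poisson_ratio(toy_points, toy_failed))  # {10.0: 0.25, 100.0: 0.25}
```

For the actual grid study, call `max_converged_poisson_ratio(grid_points, failed)` after the plotting cell.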

Previously, we called 4C through the driver directly. This no longer works once we use QUEENS iterators; instead, we need a Model. The difference is that a driver handles a single simulation, whereas a model accepts a whole batch of input samples (parameter configurations).
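The difference between a driver and a model can be illustrated with a toy example. The classes `ToyDriver` and `ToyModel` below are hypothetical and do not reproduce QUEENS's actual classes; the "simulation" is a cheap analytical placeholder standing in for a 4C run. The driver maps one parameter vector to one result, while the model maps a batch of samples to a stacked result array, which is the kind of interface an iterator works with.

```python
import numpy as np


class ToyDriver:
    """Hypothetical stand-in for a driver: runs ONE simulation."""

    def run(self, sample):
        young, poisson_ratio = sample
        # Cheap analytical placeholder for a solver run
        return young * (1.0 - 2.0 * poisson_ratio)


class ToyModel:
    """Hypothetical stand-in for a model: evaluates a BATCH of samples."""

    def __init__(self, driver):
        self.driver = driver

    def evaluate(self, samples):
        # One driver call per sample, results stacked in sample order
        results = [self.driver.run(sample) for sample in samples]
        return {"result": np.array(results)}


model = ToyModel(ToyDriver())
samples = np.array([[100.0, 0.0], [100.0, 0.25], [1000.0, 0.5]])
print(model.evaluate(samples)["result"])  # three results: 100, 50 and 0
```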

For drivers, QUEENS provides the Simulation model. Besides a driver, it requires a Scheduler. As the name suggests, the scheduler schedules the evaluations of the driver, and it can run multiple driver calls at the same time. Keep in mind that each driver call can itself be parallelized with MPI, so QUEENS natively supports nested parallelism!
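A scheduler that runs several driver calls at the same time can be sketched with Python's `concurrent.futures`. This is only an illustration of the idea and not how QUEENS's Local scheduler is implemented; `toy_driver` and `schedule` are hypothetical names. Here `num_jobs` plays the role of the scheduler's number of parallel jobs. In the nested setting, each job would additionally use `num_procs` MPI processes, so a run occupies roughly `num_jobs * num_procs` cores, e.g., 4 × 1 = 4 for `Local(num_jobs=4, num_procs=1)` used in this tutorial.

```python
from concurrent.futures import ThreadPoolExecutor


def toy_driver(sample):
    """Hypothetical single-simulation driver (placeholder computation)."""
    young, poisson_ratio = sample
    return young * (1.0 - 2.0 * poisson_ratio)


def schedule(driver, samples, num_jobs=4):
    """Evaluate driver(sample) for all samples, at most num_jobs at a time.

    Results are returned in the order of the input samples.
    """
    with ThreadPoolExecutor(max_workers=num_jobs) as executor:
        return list(executor.map(driver, samples))


samples = [(10.0, 0.0), (100.0, 0.25), (1000.0, 0.0), (10000.0, 0.25)]
results = schedule(toy_driver, samples, num_jobs=4)
print(results)  # [10.0, 50.0, 1000.0, 5000.0]
```

Threads are sufficient for this sketch because a real driver would spend most of its time waiting for an external solver process. `executor.map` keeps the results in input order, which is what lets a model match each output to its sample.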

Since we run our computations locally, we use the Local scheduler. There is also a Cluster scheduler, which submits jobs to high-performance clusters via workload managers such as SLURM or PBS.

The scheduler also creates the folder structure for an experiment. By default, it is located at ~/queens-experiments/<experiment_name>. For the grid iterator, it looks like this:

grid_4x21/
├── 0
├── 1
...
├── 80
├── 81
├── 82
├── 83
├── 9
└── input_template.4C.yaml
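The listing above is sorted lexicographically, which is why `9` appears after `83`. When post-processing the jobs in a script, you probably want them in numerical job-ID order. A minimal sketch, assuming the default location `~/queens-experiments/<experiment_name>` described above and that job folders are named by their integer job ID; `job_directories` is a hypothetical helper:

```python
from pathlib import Path


def job_directories(experiment_name, base_dir=None):
    """Return the job folders of an experiment, sorted by integer job ID.

    Assumes the default layout ~/queens-experiments/<experiment_name>/<job_id>/.
    """
    if base_dir is None:
        base_dir = Path.home() / "queens-experiments"
    experiment_dir = Path(base_dir) / experiment_name
    # Keep only folders named by a job ID (skips e.g. input_template.4C.yaml)
    jobs = [p for p in experiment_dir.iterdir() if p.is_dir() and p.name.isdigit()]
    return sorted(jobs, key=lambda p: int(p.name))


# Usage (requires the grid study to have been run):
# for job_dir in job_directories("grid_4x21"):
#     print(job_dir.name)
```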

Each numbered folder contains the files of one job, e.g., for job 10:

10
├── input_file.yaml
├── jobscript.sh
├── metadata.yaml
└── output
    ├── output.control
    ├── output.log
    ├── output.mesh.structure.s0
    ├── output-structure.pvd
    └── output-vtk-files
        ├── structure-00000-0.vtu
        ├── structure-00000.pvtu
        ├── structure-00001-0.vtu
        └── structure-00001.pvtu

Once more, have a look at the files, in particular at the log files of the failed simulations.
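To inspect the failed runs without opening each folder by hand, you can print the end of their `output/output.log` files. A minimal sketch, assuming the per-job layout shown above; `tail_of_logs` is a hypothetical helper. The job IDs of the failed runs can be taken from the `failed` array of the plotting cell, e.g., with `np.flatnonzero(failed)`, since the job ID equals the sample index there.

```python
from pathlib import Path


def tail_of_logs(experiment_dir, job_ids, num_lines=10):
    """Return {job_id: last num_lines lines of <job_id>/output/output.log}.

    Jobs without a log file map to None.
    """
    tails = {}
    for job_id in job_ids:
        log_file = Path(experiment_dir) / str(job_id) / "output" / "output.log"
        if log_file.is_file():
            lines = log_file.read_text(errors="replace").splitlines()
            tails[job_id] = lines[-num_lines:]
        else:
            tails[job_id] = None
    return tails


# Usage, assuming QUEENS_EXPERIMENTS_DIR and the failed array from above:
# failed_job_ids = np.flatnonzero(failed)
# for job_id, tail in tail_of_logs(QUEENS_EXPERIMENTS_DIR / "grid_4x21", failed_job_ids).items():
#     print(f"--- job {job_id} ---")
#     print("\n".join(tail or ["<no log file>"]))
```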

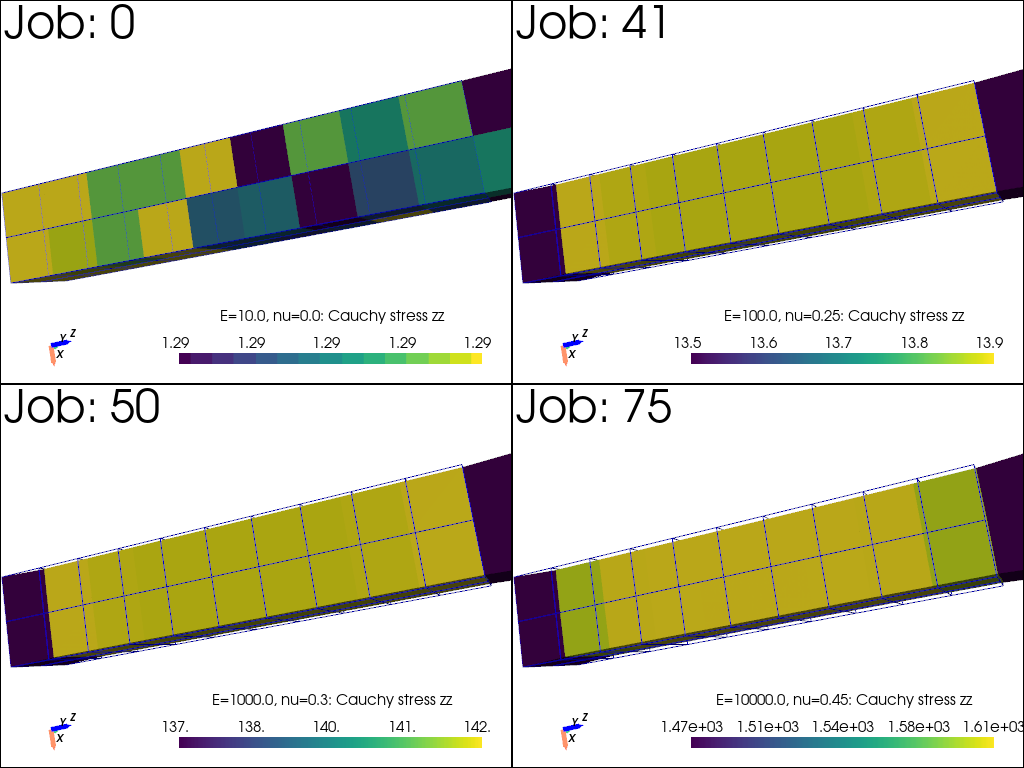

[13]:

# Let's look at some simulation outputs!
if pyvista_available:
    job_ids = [0, 41, 50, 75]
    plotter = pv.Plotter(shape=(2, 2))
    for i in range(2):
        for j in range(2):
            plotter.subplot(i, j)
            job_id = job_ids[i * 2 + j]
            file_path = (
                QUEENS_EXPERIMENTS_DIR
                / f"grid_4x21/{job_id}/output/output-vtk-files/structure-00001.pvtu"
            )
            plotter.add_text(f"Job: {job_id}")
            plot_results(
                file_path,
                plotter,
                f"E={grid_points[job_id][0]}, nu={np.round(grid_points[job_id][1], decimals=4)}: Cauchy stress zz\n",
            )
    plotter.show()

In summary, we used QUEENS to analyze a grid of 4C simulations: we identified the failed runs, inspected their outputs, and extracted data.

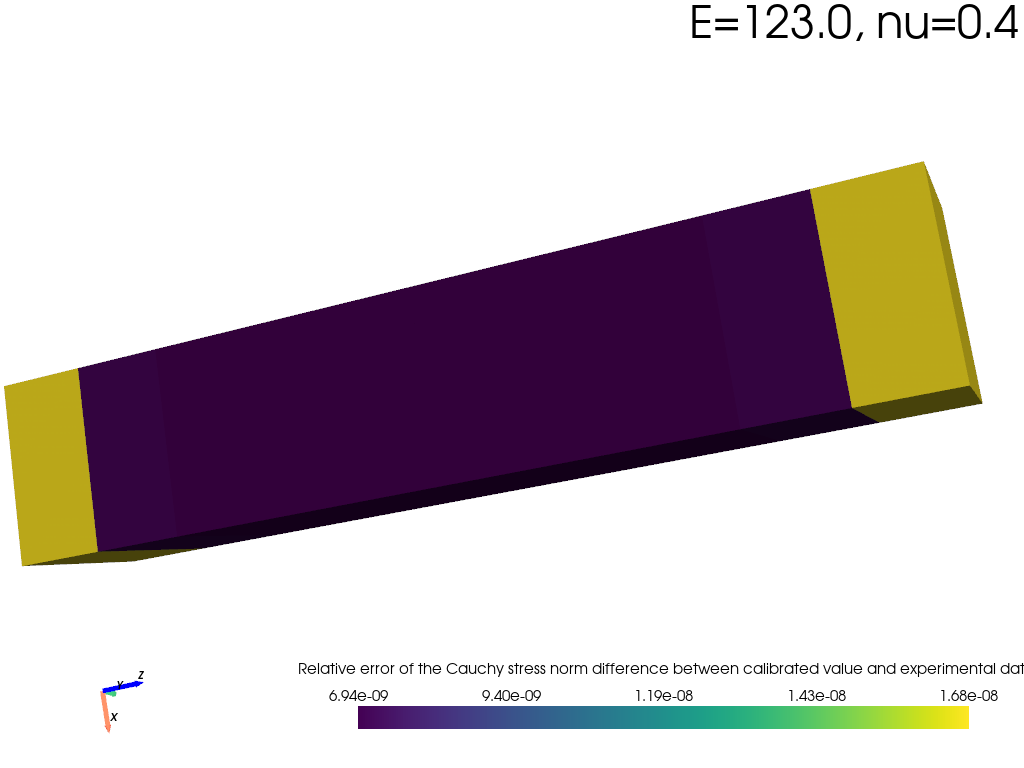

Calibration#

Now, let’s imagine we have experimental measurements \(\boldsymbol{\sigma}_\text{ex}\) of the Cauchy stresses at the element centers of a real-life cantilever. We can use them to calibrate the parameters \(E\) and \(\nu\).

Note: We assume that the 4C model predicts the real world accurately, i.e., it has the right boundary conditions, constitutive law, etc.

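To do so, we’ll use deterministic optimization to minimize the mean squared Frobenius-norm misfit between the simulated stresses and the measured element stresses \(\boldsymbol{\sigma}_{\text{ex},e}\) over all \(n_\text{ele} = 40\) elements:

\[
(E^*, \nu^*) = \underset{E,\,\nu}{\operatorname{arg\,min}} \; \frac{1}{n_\text{ele}} \sum_{e=1}^{n_\text{ele}} \left\| \boldsymbol{\sigma}_e(E,\nu) - \boldsymbol{\sigma}_{\text{ex},e} \right\|_F^2,
\]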

where \(\boldsymbol{\sigma}_e(E,\nu)\) are the elementwise stresses obtained from the 4C model evaluated at \(E, \nu\), and the subscript \(e\) denotes the element ID.
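Since the 4C output stores only the six independent components of each symmetric stress tensor, the squared Frobenius norm of the misfit has to count every shear component twice. Writing \(\boldsymbol{d} = \boldsymbol{\sigma}_e(E,\nu) - \boldsymbol{\sigma}_{\text{ex},e}\), with \(\boldsymbol{\sigma}_{\text{ex},e}\) the measured stress in element \(e\), and assuming the three normal components are stored first (as the loss function implementation assumes), a sketch of the identity reads:

\[
\|\boldsymbol{d}\|_F^2 = \sum_{i=1}^{3}\sum_{j=1}^{3} d_{ij}^2
= d_{xx}^2 + d_{yy}^2 + d_{zz}^2 + 2\left(d_{xy}^2 + d_{yz}^2 + d_{xz}^2\right),
\]

i.e., the sum of the squares of all six stored components plus the squares of the three shear components once more.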

Before we start the optimization, we need to adjust the parameter space. As identified in the grid study, the simulation does not converge for \(\nu > 0.45\). To obtain meaningful predictions, we therefore restrict the Poisson's ratio to \([0, 0.45]\).

[14]:

# Reduced parameter space for the poisson ratio

poisson_ratio = Uniform(0, 0.45)

parameters = Parameters(young=young, poisson_ratio=poisson_ratio)

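[15]:

# Let's implement the loss function
import numpy as np

from experimental_data import experimental_elementwise_cauchy_stress
from queens.models._model import Model


class LossFunction(Model):
    """Mean squared Frobenius norm of the stress misfit over all elements."""

    def __init__(self, experimental_cauchy_stresses, forward_model):
        self.experimental_cauchy_stresses = experimental_cauchy_stresses
        self.forward_model = forward_model
        super().__init__()

    def _evaluate(self, samples):
        num_samples = len(samples)
        # Simulated and measured stresses as (samples, elements, 6 components)
        element_cauchy_stresses = np.asarray(
            self.forward_model.evaluate(samples)["result"]
        ).reshape(num_samples, -1, 6)
        experimental = np.asarray(self.experimental_cauchy_stresses).reshape(1, -1, 6)
        difference = element_cauchy_stresses - experimental  # broadcast over samples

        # The output stores only the 6 components of the symmetric tensor (3 normal,
        # then 3 shear), so the off-diagonal terms of EACH element are added once more
        squared_norm = (difference**2).sum(axis=(1, 2)) + (
            difference[:, :, 3:] ** 2
        ).sum(axis=(1, 2))
        squared_norm /= difference.shape[1]  # average over the 40 elements
        return {"result": squared_norm.reshape(-1)}

    def grad(self, samples, upstream_gradient):
        # Not needed: the optimizer approximates the Jacobian by finite differences
        raise NotImplementedError("Gradients are approximated by finite differences.")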
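The "add the off-diagonal terms once more" trick can be checked numerically: for a random symmetric 3×3 tensor, the sum over the six stored components plus the three shear components again equals the squared Frobenius norm of the full tensor. The sketch assumes the storage order xx, yy, zz, xy, yz, xz; the helper `to_six_components` is hypothetical and only used for this check. The second part shows why, for a batch of flattened outputs of shape `(num_samples, 40 * 6)`, the shear slice has to be taken per element after reshaping.

```python
import numpy as np


def to_six_components(tensor):
    """Store a symmetric 3x3 tensor as [xx, yy, zz, xy, yz, xz] (assumed order)."""
    return np.array(
        [tensor[0, 0], tensor[1, 1], tensor[2, 2], tensor[0, 1], tensor[1, 2], tensor[0, 2]]
    )


rng = np.random.default_rng(seed=0)
a = rng.normal(size=(3, 3))
tensor = a + a.T  # random symmetric tensor

components = to_six_components(tensor)
squared_norm_six = (components**2).sum() + (components[3:] ** 2).sum()
squared_norm_full = np.sum(tensor**2)  # squared Frobenius norm of the full tensor
print(np.isclose(squared_norm_six, squared_norm_full))  # True

# Batched version: flattened outputs with 6 components per element
num_samples, num_elements = 2, 40
flat = rng.normal(size=(num_samples, num_elements * 6))
per_element = flat.reshape(num_samples, num_elements, 6)
# The shear components are the last 3 entries of EACH element, hence the reshape
batched = (per_element**2).sum(axis=(1, 2)) + (per_element[:, :, 3:] ** 2).sum(axis=(1, 2))
print(batched.shape)  # (2,)
```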

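Now let’s set up the optimization using the L-BFGS-B algorithm, a quasi-Newton method that supports simple bounds on the parameters. We use these bounds to keep \(\nu\) within the reduced range \([0, 0.45]\).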
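To build intuition for what the optimizer does, here is a toy problem solved directly with SciPy's `scipy.optimize.minimize` using `method="L-BFGS-B"`, the same bounds and initial guess, and a forward-difference gradient (`jac="2-point"`). The quadratic `toy_loss` is a made-up stand-in for the 4C-based loss, and we assume, without claiming this is QUEENS's exact code path, that the `Optimization` iterator delegates to a similar SciPy call. With a 2-point scheme and two parameters, each gradient needs the loss at the current point plus one perturbed point per parameter, i.e., 3 evaluations, which is consistent with the 3 jobs per batch in the output of the next cell.

```python
import numpy as np
from scipy.optimize import Bounds, minimize


def toy_loss(x, young_target=150.0, poisson_target=0.3):
    """Made-up smooth stand-in for the stress misfit; minimal at the targets."""
    young, poisson_ratio = x
    # Rescale E so both parameters contribute on a similar scale
    return ((young - young_target) / 100.0) ** 2 + (poisson_ratio - poisson_target) ** 2


bounds = Bounds([10, 0], [10000, 0.45])
initial_guess = np.array([200.0, 0.4])

result = minimize(toy_loss, initial_guess, method="L-BFGS-B", jac="2-point", bounds=bounds)
print(result.x)  # close to [150, 0.3]

# If the best fit lies outside the bounds, L-BFGS-B stops on the bound instead
result_bounded = minimize(
    toy_loss,
    initial_guess,
    args=(150.0, 0.48),
    method="L-BFGS-B",
    jac="2-point",
    bounds=bounds,
)
print(result_bounded.x)  # nu ends up at the upper bound 0.45
```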

[16]:

from scipy.optimize import Bounds

from queens.iterators import Optimization

optimum = None
if __name__ == "__main__":
    Path("optimization").mkdir(exist_ok=True)
    with GlobalSettings(
        experiment_name="optimization_young_poisson_ratio", output_dir="optimization"
    ) as gs:
        scheduler = Local(
            gs.experiment_name,
            num_jobs=4,  # 4 simulations in parallel
            num_procs=1,  # each simulation uses 1 process
        )
        custom_data_processor = CustomDataProcessor(
            field_name="element_cauchy_stresses_xyz",  # Name of the field in the pvtu file
            file_name_identifier="output-structure.pvd",  # Name of the file in the output folder
            failure_value=np.nan * np.ones(40 * 6),  # NaN for all elements
            files_to_be_deleted_regex_lst=[
                "output.control",
                "*.mesh.s0",
                "output-vtk-files/*",
            ],  # Delete these files after we've extracted the data to save storage space
        )
        fourc_driver = Fourc(
            parameters=parameters,
            input_templates=input_template,
            executable=fourc_executable,
            data_processor=custom_data_processor,  # tell the driver to extract the data
            files_to_copy=[input_mesh],  # copy mesh file to experiment directory
        )
        model = Simulation(scheduler, fourc_driver)
        loss_function = LossFunction(experimental_elementwise_cauchy_stress, model)
        optimization = Optimization(
            loss_function,
            parameters,
            gs,
            initial_guess=[200, 0.4],
            result_description={"write_results": True},
            objective_and_jacobian=True,
            bounds=Bounds([10, 0], [10000, 0.45]),
            algorithm="L-BFGS-B",
            max_feval=1000,
        )
        run_iterator(optimization, gs)
        optimum = optimization.solution.x


QUEENS (Quantification of Uncertain Effects in ENgineering Systems):

a Python framework for solver-independent multi-query

analyses of large-scale computational models.

+---------------------------------------------------------------------------------------------------+

| Local |

|- - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - -|

| self : <queens.schedulers.local.Local object at 0x7f26f445ed90> |

| experiment_name : 'optimization_young_poisson_ratio' |

| num_jobs : 4 |

| num_procs : 1 |

| restart_workers : False |

| verbose : True |

| experiment_base_dir : None |

| overwrite_existing_experiment : False |

+---------------------------------------------------------------------------------------------------+


To view the Dask dashboard open this link in your browser: http://127.0.0.1:8787/status

+---------------------------------------------------------------------------------------------------+

| Simulation |

|- - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - -|

| self : <queens.models.simulation.Simulation object at 0x7f260ff5e3d0> |

| scheduler : <queens.schedulers.local.Local object at 0x7f26f445ed90> |

| driver : <queens_interfaces.fourc.driver.Fourc object at 0x7f260ede4f90> |

+---------------------------------------------------------------------------------------------------+

+---------------------------------------------------------------------------------------------------+

| Optimization |

|- - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - -|

| self : <queens.iterators.optimization.Optimization object at 0x7f260ed06090> |

| model : <__main__.LossFunction object at 0x7f260ed0a390> |

| parameters : <queens.parameters.parameters.Parameters object at 0x7f260ff87590> |

| global_settings : <queens.global_settings.GlobalSettings object at 0x7f260ed7ead0> |

| initial_guess : [200, 0.4] |

| result_description : {'write_results': True} |

| verbose_output : False |

| bounds : Bounds(array([10, 0]), array([1.0e+04, 4.5e-01])) |

| constraints : None |

| max_feval : 1000 |

| algorithm : 'L-BFGS-B' |

| jac_method : '2-point' |

| jac_rel_step : None |

| objective_and_jacobian : True |

+---------------------------------------------------------------------------------------------------+

+---------------------------------------------------------------------------------------------------+

| git information |

|- - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - -|

| commit hash : 694e30c5df1772a57d6a18f2e62c1605cbf007c8 |

| branch : main |

| clean working tree : False |

+---------------------------------------------------------------------------------------------------+

Optimization for experiment: optimization_young_poisson_ratio

Starting Analysis...

Initialize Optimization run.

Welcome to Optimization core run.

67%|██████▋ | 2/3 [00:02<00:00, 1.03it/s]

+---------------------------------------------------------------------------------------------------+

| Batch summary for jobs 0 - 2 |

|- - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - -|

| number of jobs : 3 |

| number of parallel jobs : 4 |

| number of procs : 1 |

| total elapsed time : 2.283e+00s |

| average time per parallel job : 2.283e+00s |

+---------------------------------------------------------------------------------------------------+

100%|██████████| 3/3 [00:02<00:00, 1.31it/s]

The intermediate, iterated parameters ['young', 'poisson_ratio'] are:

[200. 0.4]

100%|██████████| 3/3 [00:01<00:00, 1.45it/s]

+---------------------------------------------------------------------------------------------------+

| Batch summary for jobs 3 - 5 |

|- - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - -|

| number of jobs : 3 |

| number of parallel jobs : 4 |

| number of procs : 1 |

| total elapsed time : 1.955e+00s |

| average time per parallel job : 1.955e+00s |

+---------------------------------------------------------------------------------------------------+

100%|██████████| 3/3 [00:01<00:00, 1.53it/s]

The intermediate, iterated parameters ['young', 'poisson_ratio'] are:

[192.96485958 0. ]

33%|███▎ | 1/3 [00:00<00:01, 1.19it/s]

+---------------------------------------------------------------------------------------------------+

| Batch summary for jobs 6 - 8 |

|- - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - -|

| number of jobs : 3 |

| number of parallel jobs : 4 |

| number of procs : 1 |

| total elapsed time : 8.705e-01s |

| average time per parallel job : 8.705e-01s |

+---------------------------------------------------------------------------------------------------+

100%|██████████| 3/3 [00:00<00:00, 3.44it/s]

The intermediate, iterated parameters ['young', 'poisson_ratio'] are:

[192.63505091 0. ]

33%|███▎ | 1/3 [00:00<00:01, 1.10it/s]

+---------------------------------------------------------------------------------------------------+

| Batch summary for jobs 9 - 11 |

|- - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - -|

| number of jobs : 3 |

| number of parallel jobs : 4 |

| number of procs : 1 |

| total elapsed time : 9.729e-01s |

| average time per parallel job : 9.729e-01s |

+---------------------------------------------------------------------------------------------------+

100%|██████████| 3/3 [00:00<00:00, 3.08it/s]

The intermediate, iterated parameters ['young', 'poisson_ratio'] are:

[191.31581623 0. ]

33%|███▎ | 1/3 [00:00<00:01, 1.15it/s]

+---------------------------------------------------------------------------------------------------+

| Batch summary for jobs 12 - 14 |

|- - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - -|

| number of jobs : 3 |

| number of parallel jobs : 4 |

| number of procs : 1 |

| total elapsed time : 9.593e-01s |

| average time per parallel job : 9.593e-01s |

+---------------------------------------------------------------------------------------------------+

100%|██████████| 3/3 [00:00<00:00, 3.12it/s]

The intermediate, iterated parameters ['young', 'poisson_ratio'] are:

[186.03887752 0. ]

33%|███▎ | 1/3 [00:00<00:01, 1.27it/s]

+---------------------------------------------------------------------------------------------------+

| Batch summary for jobs 15 - 17 |

|- - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - -|

| number of jobs : 3 |

| number of parallel jobs : 4 |

| number of procs : 1 |

| total elapsed time : 8.640e-01s |

| average time per parallel job : 8.640e-01s |

+---------------------------------------------------------------------------------------------------+

100%|██████████| 3/3 [00:00<00:00, 3.47it/s]

The intermediate, iterated parameters ['young', 'poisson_ratio'] are:

[143.06999551 0. ]

33%|███▎ | 1/3 [00:00<00:01, 1.14it/s]

+---------------------------------------------------------------------------------------------------+

| Batch summary for jobs 18 - 20 |

|- - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - -|

| number of jobs : 3 |

| number of parallel jobs : 4 |

| number of procs : 1 |

| total elapsed time : 9.076e-01s |

| average time per parallel job : 9.076e-01s |

+---------------------------------------------------------------------------------------------------+

100%|██████████| 3/3 [00:00<00:00, 3.30it/s]

The intermediate, iterated parameters ['young', 'poisson_ratio'] are:

[1.42356700e+02 2.24250186e-02]

33%|███▎ | 1/3 [00:00<00:01, 1.12it/s]

+---------------------------------------------------------------------------------------------------+

| Batch summary for jobs 21 - 23 |

|- - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - -|

| number of jobs : 3 |

| number of parallel jobs : 4 |

| number of procs : 1 |

| total elapsed time : 9.126e-01s |

| average time per parallel job : 9.126e-01s |

+---------------------------------------------------------------------------------------------------+

100%|██████████| 3/3 [00:00<00:00, 3.28it/s]

The intermediate, iterated parameters ['young', 'poisson_ratio'] are:

[1.39503519e+02 1.12125093e-01]

33%|███▎ | 1/3 [00:00<00:01, 1.28it/s]

+---------------------------------------------------------------------------------------------------+

| Batch summary for jobs 24 - 26 |

|- - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - -|

| number of jobs : 3 |

| number of parallel jobs : 4 |

| number of procs : 1 |

| total elapsed time : 8.727e-01s |

| average time per parallel job : 8.727e-01s |

+---------------------------------------------------------------------------------------------------+

100%|██████████| 3/3 [00:00<00:00, 3.43it/s]

The intermediate, iterated parameters ['young', 'poisson_ratio'] are:

[128.75638866 0.45 ]

100%|██████████| 3/3 [00:00<00:00, 3.79it/s]

+---------------------------------------------------------------------------------------------------+

| Batch summary for jobs 27 - 29 |

|- - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - -|

| number of jobs : 3 |

| number of parallel jobs : 4 |

| number of procs : 1 |

| total elapsed time : 9.705e-01s |

| average time per parallel job : 9.705e-01s |

+---------------------------------------------------------------------------------------------------+

100%|██████████| 3/3 [00:00<00:00, 3.09it/s]

The intermediate, iterated parameters ['young', 'poisson_ratio'] are:

[138.33707662 0.14879642]

100%|██████████| 3/3 [00:01<00:00, 3.57it/s]

+---------------------------------------------------------------------------------------------------+

| Batch summary for jobs 30 - 32 |

|- - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - -|

| number of jobs : 3 |

| number of parallel jobs : 4 |

| number of procs : 1 |

| total elapsed time : 1.018e+00s |

| average time per parallel job : 1.018e+00s |

+---------------------------------------------------------------------------------------------------+

100%|██████████| 3/3 [00:01<00:00, 2.94it/s]

The intermediate, iterated parameters ['young', 'poisson_ratio'] are:

[129.76606369 0.45 ]

33%|███▎ | 1/3 [00:00<00:01, 1.22it/s]

+---------------------------------------------------------------------------------------------------+

| Batch summary for jobs 33 - 35 |

|- - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - -|

| number of jobs : 3 |

| number of parallel jobs : 4 |

| number of procs : 1 |

| total elapsed time : 8.664e-01s |

| average time per parallel job : 8.664e-01s |

+---------------------------------------------------------------------------------------------------+

100%|██████████| 3/3 [00:00<00:00, 3.46it/s]

The intermediate, iterated parameters ['young', 'poisson_ratio'] are:

[133.82708486 0.3072871 ]

33%|███▎ | 1/3 [00:00<00:01, 1.19it/s]

+---------------------------------------------------------------------------------------------------+

| Batch summary for jobs 36 - 38 |

|- - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - -|

| number of jobs : 3 |

| number of parallel jobs : 4 |

| number of procs : 1 |

| total elapsed time : 8.771e-01s |

| average time per parallel job : 8.771e-01s |

+---------------------------------------------------------------------------------------------------+

100%|██████████| 3/3 [00:00<00:00, 3.42it/s]

The intermediate, iterated parameters ['young', 'poisson_ratio'] are:

[116.36545562 0.45 ]

33%|███▎ | 1/3 [00:00<00:01, 1.20it/s]

+---------------------------------------------------------------------------------------------------+

| Batch summary for jobs 39 - 41 |

|- - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - -|

| number of jobs : 3 |

| number of parallel jobs : 4 |

| number of procs : 1 |

| total elapsed time : 9.076e-01s |

| average time per parallel job : 9.076e-01s |

+---------------------------------------------------------------------------------------------------+

100%|██████████| 3/3 [00:00<00:00, 3.30it/s]

The intermediate, iterated parameters ['young', 'poisson_ratio'] are:

[129.56218769 0.34214386]

33%|███▎ | 1/3 [00:00<00:01, 1.23it/s]

+---------------------------------------------------------------------------------------------------+

| Batch summary for jobs 42 - 44 |

|- - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - -|

| number of jobs : 3 |

| number of parallel jobs : 4 |

| number of procs : 1 |

| total elapsed time : 8.717e-01s |

| average time per parallel job : 8.717e-01s |

+---------------------------------------------------------------------------------------------------+

100%|██████████| 3/3 [00:00<00:00, 3.44it/s]

The intermediate, iterated parameters ['young', 'poisson_ratio'] are:

[120.31397294 0.45 ]

+---------------------------------------------------------------------------------------------------+

| Batch summary for jobs 45 - 47 |

|- - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - -|

| number of jobs : 3 |

| number of parallel jobs : 4 |

| number of procs : 1 |

| total elapsed time : 8.714e-01s |

| average time per parallel job : 8.714e-01s |

+---------------------------------------------------------------------------------------------------+

100%|██████████| 3/3 [00:00<00:00, 3.44it/s]

The intermediate, iterated parameters ['young', 'poisson_ratio'] are:

[125.75154629 0.38658499]

100%|██████████| 3/3 [00:00<00:00, 3.66it/s]

+---------------------------------------------------------------------------------------------------+

| Batch summary for jobs 48 - 50 |

|- - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - -|

| number of jobs : 3 |

| number of parallel jobs : 4 |

| number of procs : 1 |

| total elapsed time : 9.963e-01s |

| average time per parallel job : 9.963e-01s |

+---------------------------------------------------------------------------------------------------+

100%|██████████| 3/3 [00:00<00:00, 3.01it/s]

The intermediate, iterated parameters ['young', 'poisson_ratio'] are:

[120.31406965 0.40743052]

+---------------------------------------------------------------------------------------------------+

| Batch summary for jobs 51 - 53 |

|- - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - -|

| number of jobs : 3 |

| number of parallel jobs : 4 |

| number of procs : 1 |

| total elapsed time : 9.698e-01s |

| average time per parallel job : 9.698e-01s |

+---------------------------------------------------------------------------------------------------+

100%|██████████| 3/3 [00:00<00:00, 3.09it/s]

The intermediate, iterated parameters ['young', 'poisson_ratio'] are:

[123.12252429 0.39666381]

+---------------------------------------------------------------------------------------------------+

| Batch summary for jobs 54 - 56 |

|- - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - -|

| number of jobs : 3 |

| number of parallel jobs : 4 |

| number of procs : 1 |

| total elapsed time : 8.883e-01s |

| average time per parallel job : 8.883e-01s |

+---------------------------------------------------------------------------------------------------+

100%|██████████| 3/3 [00:00<00:00, 3.37it/s]

The intermediate, iterated parameters ['young', 'poisson_ratio'] are:

[122.77541081 0.40501085]

+---------------------------------------------------------------------------------------------------+

| Batch summary for jobs 57 - 59 |

|- - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - -|

| number of jobs : 3 |

| number of parallel jobs : 4 |

| number of procs : 1 |

| total elapsed time : 9.577e-01s |

| average time per parallel job : 9.577e-01s |

+---------------------------------------------------------------------------------------------------+

100%|██████████| 3/3 [00:00<00:00, 3.13it/s]

The intermediate, iterated parameters ['young', 'poisson_ratio'] are:

[122.98363053 0.40000379]

+---------------------------------------------------------------------------------------------------+

| Batch summary for jobs 60 - 62 |

|- - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - -|

| number of jobs : 3 |

| number of parallel jobs : 4 |

| number of procs : 1 |

| total elapsed time : 8.664e-01s |

| average time per parallel job : 8.664e-01s |

+---------------------------------------------------------------------------------------------------+

100%|██████████| 3/3 [00:00<00:00, 3.46it/s]

The intermediate, iterated parameters ['young', 'poisson_ratio'] are:

[122.99965387 0.40000297]

+---------------------------------------------------------------------------------------------------+

| Batch summary for jobs 63 - 65 |

|- - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - -|

| number of jobs : 3 |

| number of parallel jobs : 4 |

| number of procs : 1 |

| total elapsed time : 9.076e-01s |

| average time per parallel job : 9.076e-01s |

+---------------------------------------------------------------------------------------------------+

100%|██████████| 3/3 [00:00<00:00, 3.30it/s]

The intermediate, iterated parameters ['young', 'poisson_ratio'] are:

[123.00002037 0.39999982]

+---------------------------------------------------------------------------------------------------+

| Batch summary for jobs 66 - 68 |

|- - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - -|

| number of jobs : 3 |

| number of parallel jobs : 4 |

| number of procs : 1 |

| total elapsed time : 9.557e-01s |

| average time per parallel job : 9.557e-01s |

+---------------------------------------------------------------------------------------------------+

100%|██████████| 3/3 [00:00<00:00, 3.14it/s]

The intermediate, iterated parameters ['young', 'poisson_ratio'] are:

[122.9999999 0.39999999]

Optimization took 2.375447E+01 seconds.

The optimum:

[122.9999999 0.39999999]

Time for CALCULATION: 23.76087260246277 s


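The `tensor_norm` helper in the next cell reduces each six-component stress entry to a single scalar. A symmetric 3×3 tensor contains each off-diagonal component twice. Appending the three off-diagonal columns a second time before summing the squares therefore yields the Frobenius norm of the full tensor.

The standalone sketch below checks that reasoning against `np.linalg.norm` of the assembled 3×3 matrix. It assumes, as the helper implies, that columns 0-2 hold the diagonal terms and columns 3-5 the off-diagonal terms. The order [xy, yz, xz] is only an illustrative assumption, since the norm does not depend on it. The stress values and the helper `to_full_tensor` are hypothetical.

```python
import numpy as np


def tensor_norm(tensor_data):
    """Frobenius norm of symmetric 3x3 tensors stored as six components per row.

    Assumes columns 0-2 are the diagonal terms and columns 3-5 the off-diagonal terms.
    """
    data = np.asarray(tensor_data, dtype=float)
    # Each off-diagonal term appears twice in the full tensor, so append it once more
    data = np.hstack((data, data[:, 3:]))
    return np.sqrt((data**2).sum(axis=1)).reshape(-1, 1)


def to_full_tensor(row):
    """Hypothetical helper: expand [xx, yy, zz, xy, yz, xz] into a symmetric 3x3 matrix."""
    xx, yy, zz, xy, yz, xz = row
    return np.array([[xx, xy, xz], [xy, yy, yz], [xz, yz, zz]])


# Two hypothetical stress states, one per row
stresses = np.array(
    [
        [1.0, 2.0, 3.0, 4.0, 5.0, 6.0],
        [-2.0, 0.5, 0.0, 1.5, 0.0, -3.0],
    ]
)

norms = tensor_norm(stresses)
# np.linalg.norm of a 2D array defaults to the Frobenius norm
reference = np.array([[np.linalg.norm(to_full_tensor(r))] for r in stresses])
print(norms.ravel())
print(np.allclose(norms, reference))  # True
```

Because the helper returns a column vector of shape `(n, 1)`, its result can be assigned directly as a point or cell field on a mesh, which is how the next cell uses it.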
[17]:

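# Let's do a forward simulation with the optimal parameters.
# Run 4C without a data processor (no post-processing of its output).
fourc_driver.data_processor = None
fourc_driver.run(
    optimum,
    experiment_dir=Path.cwd(),
    job_id="calibrated_value",
    num_procs=1,
    experiment_name=None,
)


def tensor_norm(tensor_data):
    """Return the Frobenius norm of each symmetric tensor (one tensor per row).

    Columns 0-2 hold the diagonal terms, columns 3-5 the off-diagonal terms.
    """
    # Off-diagonal terms appear twice in the full 3x3 tensor, so duplicate them
    data = np.hstack((tensor_data.tolist(), tensor_data[:, 3:].tolist()))
    return np.sqrt((data**2).sum(axis=1)).reshape(-1, 1)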

def plot_tensor_norm(output_mesh, field_name, data, plotter, color_bar_title):
    output_mesh[field_name] = data
    plotter.add_mesh(
        output_mesh,